Introduction

Leukaemia is a group of cancers that originate in the bone marrow and lead to an overproduction of abnormal white blood cells (WBCs). Diagnosis often relies on microscopic examination (with flow cytometry or genetic testing for confirmation), which can be resource-intensive and time-consuming. Automating WBC classification offers a promising alternative, especially for under-resourced laboratories, yet robust detection of blasts and other rare cells in blood smear images still requires further validation and optimization.

This challenge evaluates algorithms on single-site images with standardized staining and acquisition. To simulate real-world domain shift, we synthetically introduce scanner- and setting-related variability (e.g., noise and blur). The held-out test set enforces patient-level separation, and the training and validation sets use group-stratified splits to preserve coverage of minority classes.
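Patient-level separation means that all images from one patient land in the same split, so a model is never tested on a patient it has already seen during training. The Python sketch below illustrates the idea. It is not the organizers' splitting code. It assumes each image record is a hypothetical `(image_id, patient_id)` pair and that split sizes are given as fractions of patients. It does not perform class stratification; scikit-learn's `StratifiedGroupKFold` is one existing tool that combines patient grouping with stratification.

```python
import random
from collections import defaultdict


def patient_level_split(records, fractions, seed=0):
    """Split image records so that each patient lands in exactly one split.

    records:   iterable of (image_id, patient_id) pairs (hypothetical format).
    fractions: dict mapping split name -> fraction of *patients* in that split.
    Returns a dict mapping split name -> list of image_ids.
    """
    images_by_patient = defaultdict(list)
    for image_id, patient_id in records:
        images_by_patient[patient_id].append(image_id)

    # Shuffle patient IDs (not images) with a fixed seed for reproducibility.
    patients = sorted(images_by_patient)
    random.Random(seed).shuffle(patients)

    names = list(fractions)
    splits = {name: [] for name in names}
    start = 0
    for i, name in enumerate(names):
        # The last split takes any remainder so every patient is assigned once.
        if i == len(names) - 1:
            end = len(patients)
        else:
            end = start + round(fractions[name] * len(patients))
        for patient_id in patients[start:end]:
            splits[name].extend(images_by_patient[patient_id])
        start = end
    return splits


# Toy data: 10 patients with 10 images each.
records = [(f"img{i}", f"patient{i % 10}") for i in range(100)]
splits = patient_level_split(records, {"train": 0.6, "val": 0.1, "test": 0.3})
print({name: len(images) for name, images in splits.items()})  # {'train': 60, 'val': 10, 'test': 30}
```

Shuffling patients rather than images is the key design choice: an image-level shuffle would scatter one patient's cells across splits and inflate test scores.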

Key Facts

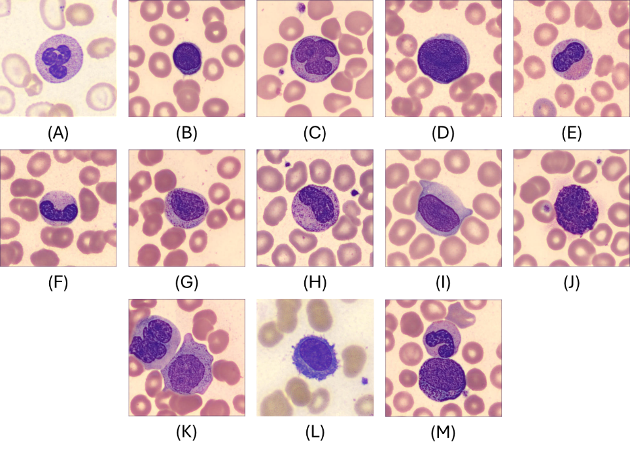

- 13 WBC classes; expert hematopathologist annotations

- Severe class imbalance & fine-grained morphology

- Standardized submission schema & open evaluator

- Main metric: macro-averaged F1
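Macro-averaged F1 computes an F1 score for each class and takes their unweighted mean, so a rare class counts as much as a common one. The Python sketch below (not the official evaluator) shows why this matters under severe imbalance. The labels `"neutrophil"` and `"blast"` are illustrative, and scoring a class with no true and no predicted samples as 0.0 is an assumption.

```python
from collections import Counter


def per_class_f1(y_true, y_pred, classes):
    """F1 = 2TP / (2TP + FP + FN) for each class, computed one-vs-rest."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class p, but the sample was not p
            fn[t] += 1  # true class t was missed
    scores = {}
    for c in classes:
        denom = 2 * tp[c] + fp[c] + fn[c]
        # Assumption: a class with no true and no predicted samples scores 0.0.
        scores[c] = 2 * tp[c] / denom if denom else 0.0
    return scores


def macro_f1(y_true, y_pred, classes):
    """Unweighted mean of per-class F1, so every class counts equally."""
    scores = per_class_f1(y_true, y_pred, classes)
    return sum(scores.values()) / len(classes)


# 98 common cells and 2 rare cells; the model always predicts the common class.
y_true = ["neutrophil"] * 98 + ["blast"] * 2
y_pred = ["neutrophil"] * 100
classes = ["neutrophil", "blast"]

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(f"accuracy = {accuracy:.3f}")  # accuracy = 0.980
print(f"macro-F1 = {macro_f1(y_true, y_pred, classes):.3f}")  # macro-F1 = 0.495
```

A classifier that ignores the rare class still reaches 98% accuracy, but its macro-F1 drops to about 0.5 because the missed class contributes an F1 of zero.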

Challenge Overview Paper

If you use the WBCBench 2026 dataset, evaluator, or results in your work, please cite our challenge overview paper:

WBCBench 2026: A Challenge for Robust White Blood Cell Classification Under Class Imbalance and Domain Shift

Xin Tian, Xudong Ma, Tianqi Yang, Alin Achim, Bartłomiej W. Papież, Phandee Watanaboonyongcharoen, Nantheera Anantrasirichai

In Proc. 2026 IEEE International Symposium on Biomedical Imaging (ISBI), IEEE, 2026.

BibTeX

@inproceedings{wbcbench2026,
  author    = {Tian, Xin and Ma, Xudong and Yang, Tianqi and
               Achim, Alin and Papie\.{z}, Bart{\l}omiej W. and
               Watanaboonyongcharoen, Phandee and
               Anantrasirichai, Nantheera},
  title     = {{WBCBench 2026}: A Challenge for Robust White Blood Cell
               Classification Under Class Imbalance and Domain Shift},
  booktitle = {2026 IEEE International Symposium on Biomedical Imaging (ISBI)},
  year      = {2026},
  publisher = {IEEE},
}

Goals & Objectives

G1 — Comparability & Reproducibility

Standardized splits and an open evaluator with a fixed submission format.

G2 — Rare-Class Reliability

Prioritize macro-F1 and require class-wise reporting to highlight minority-class performance.

G3 — Practical Impact

Provide baselines and a clear submission workflow; publish a concise post-challenge summary and recommended practices.

Important Dates


November 19 — Training Dataset Phase 1 (15% of patients) Released

phase1_train – 74 patients, 8,288 images. This clean, well-curated subset is available for download on Kaggle and serves as the initial training set and a sanity-check benchmark for participants.

November 26 — Training Dataset Phase 2 (remaining 85% of patients) Released with Evaluation Set

phase2_train (45%) – 222 patients, 24,897 images

phase2_eval (10%) – 49 patients, 5,350 images

phase2_test (30%) – 148 patients, 16,477 images (not released; used internally for leaderboard ranking)

From this date, teams can submit results for leaderboard evaluation.

February 6 — Early Code + Weights Submission Deadline (Top 10 Teams)

For reproducibility checks, we will email the teams provisionally ranked in the top 10 three days before this deadline and ask them to submit their code and model weights.

February 26 — Paper Submission Deadline (4-page paper in IEEE ISBI format, via EDAS, Challenge Track) & Competition Closes

Submit your challenge paper via the ISBI 2026 EDAS platform under the Challenge Track. On this date, the Kaggle submission portal closes and no further submissions are accepted. We will validate the submitted code and models to confirm the final leaderboard.

March 1 — Reviews Released on EDAS

Authors receive reviews and have two weeks to address comments and make minor updates to the manuscript or method description.

March 15 — Camera-ready Deadline & Top-performing Teams Announced

Upload the final paper to EDAS. On the same date, the provisional top-ranking teams will be announced on our website.

March 20 — Presentation Format Notification

Authors are informed whether their presentation is oral or poster.

April 8–11 — Winners Announced

Final results and award announcements during ISBI 2026.

Final dates follow the official ISBI 2026 schedule. Please refer to the ISBI Challenge page for any updates: https://biomedicalimaging.org/2026/challenges/

Team Eligibility

- Open to academia, industry, and independent teams (subject to local laws/sanctions).

- One registered team per method entry; overlapping membership must be declared.

- Teams from the organizers' institutes may participate and appear on the leaderboard but are ineligible for prizes.

- External public data/pretrained models allowed with disclosure; private/undisclosed data disallowed.

- Ethical use only: no attempts to re-identify subjects or circumvent de-identification.

Registration & Access

Registration is now closed. The WBCBench 2026 challenge concluded at ISBI 2026 in London, UK, on April 11, 2026.

Thank you to the 241 registered teams from academia, industry, and independent research worldwide who participated. The Kaggle competition page remains available for browsing the leaderboard and reviewing the challenge protocol.

Dataset

The dataset comprises 55,012 peripheral blood smear images across 13 WBC classes. Images are provided as 368×368 px JPEG files and were annotated by expert hematopathologists. The dataset is highly imbalanced, reflecting real-world prevalence: common classes (e.g., segmented neutrophils) dominate, while rare leukocytes appear only a few times.
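As a quick consistency check, the per-phase patient and image counts listed under Important Dates add up to the dataset size stated above. The short Python snippet below tallies them. It only restates numbers given on this page.

```python
# (patients, images) per split, as listed under Important Dates.
splits = {
    "phase1_train": (74, 8_288),
    "phase2_train": (222, 24_897),
    "phase2_eval": (49, 5_350),
    "phase2_test": (148, 16_477),
}

total_patients = sum(patients for patients, _ in splits.values())
total_images = sum(images for _, images in splits.values())

for name, (patients, images) in splits.items():
    print(f"{name}: {patients / total_patients:.1%} of patients, "
          f"{images / total_images:.1%} of images")
print(total_patients, total_images)  # 493 55012
```

The four splits cover 493 patients and 55,012 images, and the patient shares come out at roughly 15%, 45%, 10% and 30%, matching the stated phase percentages.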

The dataset was released to participants via a dedicated Kaggle link during the challenge (training/validation downloadable; test set hidden for fair evaluation). Licensing follows an appropriate open research license (e.g., CC BY-NC-SA 4.0) to encourage non-commercial research and reproducibility.

Post-challenge update: We are finalising the dataset for a public release on Hugging Face. The link will be posted here once preparation is complete — stay tuned.

Figure 1 shows representative examples of the 13 WBC categories.

Evaluation

Ranking Rules

- Primary metric: macro-averaged F1 across all classes.

- If tied, use balanced accuracy; if still tied, use macro-averaged precision.

- If still tied, use macro-averaged specificity.

- If all of the above are tied, the final ranking is determined by inference time (verified by the organizers).
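The tie-breaking cascade above maps naturally onto a lexicographic sort key. The Python sketch below is a hypothetical illustration, not the organizers' evaluator. It assumes each submission's metrics are already computed, that higher is better for the four metrics and lower is better for inference time, and that a tie means exact equality after optional rounding (the `ndigits` parameter is an assumption). For reference, balanced accuracy is the mean per-class recall, and macro-averaged specificity is the mean over classes of TN / (TN + FP).

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Submission:
    team: str
    macro_f1: float
    balanced_accuracy: float
    macro_precision: float
    macro_specificity: float
    inference_seconds: float  # assumption: lower is better


def rank(submissions: List[Submission], ndigits: Optional[int] = None) -> List[Submission]:
    """Order submissions by macro-F1, then apply the tie-breakers in sequence."""

    def q(x: float) -> float:
        # Optionally round so that near-identical scores count as ties.
        return x if ndigits is None else round(x, ndigits)

    # Negate the "higher is better" metrics so one ascending sort handles all keys.
    return sorted(
        submissions,
        key=lambda s: (
            -q(s.macro_f1),
            -q(s.balanced_accuracy),
            -q(s.macro_precision),
            -q(s.macro_specificity),
            s.inference_seconds,
        ),
    )


# Hypothetical teams: B and C tie on macro-F1, so balanced accuracy decides.
board = rank([
    Submission("A", 0.70, 0.80, 0.70, 0.97, 12.0),
    Submission("B", 0.75, 0.82, 0.74, 0.98, 30.0),
    Submission("C", 0.75, 0.85, 0.72, 0.98, 45.0),
])
print([s.team for s in board])  # ['C', 'B', 'A']
```

Encoding the whole cascade in one tuple key keeps the rules in a single place: a later criterion is consulted only when every earlier one is equal.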

Competition Rules

- On Kaggle, each team is allowed a maximum of 10 submissions per day for leaderboard evaluation.

- Top 5 teams will be asked to submit executable code and trained models for verification.

A fixed submission schema and open evaluator are provided to ensure fair, reproducible benchmarking.

Awards & Winners

Winners were announced at the WBCBench 2026 Challenge Session, ISBI 2026, London, UK, on April 11, 2026. Of 241 registered teams and 101 valid submissions, 7 teams achieved a macro-F1 above 0.70, and the top team outperformed the ResNet-50 baseline by +14.2 macro-F1 points. Congratulations to all participants!

🏆 First Prize — $800

Team FDVTS_WBC · Macro-F1: 0.777

Fan Xiao, Zirui Chen, Jilan Xu, Junlin Hou — Fudan University · Nanjing Agricultural University · University of Oxford · HKUST

Foundation Model Enhanced Hierarchical Learning for White Blood Cell Classification

Method: Hierarchical ensemble of DinoBloom + DINOv3 foundation models, dedicated PLY binary classifier with exemplar matching, and pseudo-labelling self-training.

🥈 Second Prize — $600

Team PathMedAI · Macro-F1: 0.771

Duc T. Nguyen, Hoang-Long Nguyen, Huy-Hieu Pham — VinUniversity, Hanoi, Vietnam (College of Engineering & Computer Science · VinUni-Illinois Smart Health Center · Computer Vision and Medical AI Lab)

Synergizing Deep Learning and Biological Heuristics for Extreme Long-Tail White Blood Cell Classification

Method: 5-fold Swin-Small ensemble with MedSigLIP contrastive head, Pix2Pix GAN denoising, and geometric heuristic filters.

🥉 Third Prize — $500

Team AIO-MHIL · Macro-F1: 0.708

Luu Le, Hoang-Loc Cao, Ha-Hieu Pham, Thanh-Huy Nguyen, Ulas Bagci — University of Technology HCMC · University of Science HCMC · VinUniversity · Carnegie Mellon University · Northwestern University

Robust White Blood Cell Classification with Stain-Normalized Decoupled Learning and Ensembling

Method: Two-stage decoupled training with ResNet-50/152 ensemble, Macenko stain normalisation, and 8× test-time augmentation.

📝 Best Paper Award — $100

Team PathMedAI

Duc T. Nguyen, Hoang-Long Nguyen, Huy-Hieu Pham — VinUniversity, Hanoi, Vietnam

Synergizing Deep Learning and Biological Heuristics for Extreme Long-Tail White Blood Cell Classification

Other Accepted Papers


Multi-Stage Fine-Tuning of Pathology Foundation Models with Head-Diverse Ensembling for White Blood Cell Classification

Antony Gitau, Martin Paulson, Bjørn-Jostein Singstad, Karl Thomas Hjelmervik, Ola Marius Lysaker, Veralia Gabriela Sanchez — University of South-Eastern Norway · Vestfold Hospital Trust

A Hierarchical Ensemble Inference Pipeline for Robust White Blood Cell Classification Under Domain Shifts

Tingkwong Ng, Ruyi Dai, Hao Chen — The Hong Kong University of Science and Technology

Ensemble of Small Classifiers for Imbalanced White Blood Cell Classification

Siddharth Srivastava, Adam Smith, Scott Brooks, Jack Bacon, Till Bretschneider — University of Warwick · Intelligent Imaging Innovations Ltd

Organizers

- Nantheera Anantrasirichai, University of Bristol, UK

- Alin Achim, University of Bristol, UK

- Bartek Papiez, University of Oxford, UK

- Xudong Ma, University of Oxford, UK

- Xin Tian, University of Oxford, UK

- Tianqi Yang, University College London, UK

- Phandee Watanaboonyongcharoen, Chulalongkorn University, Thailand

Annotation team (Laboratory Medicine, Chulalongkorn University): Phandee Watanaboonyongcharoen (Lead), Kwanlada Chaiwong, Manissara Yeekaday, Rujira Naksith, Suppakorn Wongkamchai, Jirapa Kaewkhruawan, Luksamon Tipsuriya, Wararat Masalae, Waroonkarn Laiklang, Pitchayaporn Riyagoon, Sunudda Nowaratsopon, Tapakorn Thepnarin, Winyanan Nunphuak.

Contact

- Xin Tian — xin.tian@well.ox.ac.uk — University of Oxford

- Xudong Ma — xudong.ma@ndph.ox.ac.uk — University of Oxford

- Tianqi Yang — tianqi.yang@ucl.ac.uk — University College London

- Nantheera Anantrasirichai — N.Anantrasirichai@bristol.ac.uk — University of Bristol
